A new BMJ Open paper highlights an important problem: fluent answers are not always accurate answers. People are turning to chatbots like ChatGPT and Gemini for health advice, and athletes and practitioners use them for nutrition advice, updates or performance advice. But how reliable are these chatbots when the topic is health, nutrition or performance?

I was fortunate to be part of a group of established researchers that aimed to address exactly that question. In a study, we audited five popular AI chatbots and examined how they responded to questions in health and medical areas that are particularly vulnerable to misinformation. The initiative was led by Dr Nick Tiller, and the paper was just published in BMJ Open. Here is the LINK to the Open Access paper.

The findings were clear and sobering. Performance was often poor. References were frequently unreliable. And the combination of confident language with weak evidence creates a real risk when these tools are used uncritically.

What the study investigated

The paper, “Generative artificial intelligence-driven chatbots and medical misinformation: an accuracy, referencing and readability audit,” evaluated five public-facing chatbots: Gemini, DeepSeek, Meta AI, ChatGPT and Grok. The models were tested in February 2025 using 50 prompts covering five categories: cancer, vaccines, stem cells, nutrition and athletic performance. The prompts included both closed-ended and open-ended questions, and they were deliberately designed to push the models towards common misinformation themes or contraindicated advice.

That design matters. In the real world, people don’t always ask clear, well-structured questions. They ask questions shaped by confusion, bias, fear, headlines, social media, and prior beliefs. So if we want to understand the real-world risk of chatbots in health, we have to test them under pressure, not only in ideal scenarios. The study did that.

The responses were rated by subject experts. The researchers also assessed citation accuracy and completeness, and they measured readability using standard readability scoring.

The main findings

The headline result is difficult to ignore. Nearly half of all chatbot responses were rated as problematic. Specifically, 30% were classified as somewhat problematic and 19.6% as highly problematic. So this was not a case of occasional minor errors. Roughly one in five responses was considered highly problematic.
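To make the arithmetic behind these headline figures concrete, here is a minimal Python sketch using only the numbers quoted in this post. The implied counts rest on an assumption: that both percentages use all responses (5 chatbots × 50 prompts) as the denominator. The variable names are illustrative and are not taken from the paper.

```python
# Figures quoted in the post
chatbots = 5
prompts = 50
pct_lower_tier = 30.0  # lower "problematic" tier, percent of responses
pct_high_tier = 19.6   # "highly problematic" tier, percent of responses

total_responses = chatbots * prompts
pct_problematic = pct_lower_tier + pct_high_tier

# ASSUMPTION: both percentages are calculated out of all responses
n_lower = round(total_responses * pct_lower_tier / 100)
n_high = round(total_responses * pct_high_tier / 100)

print(f"Total responses: {total_responses}")
print(f"Problematic overall: {pct_problematic:.1f}%")
print(f"Implied counts: {n_lower} + {n_high} = {n_lower + n_high}")
print(f"Highly problematic: about 1 in {total_responses / n_high:.1f}")
```

Under that assumption, the two tiers together account for just under half of all responses, which is where “nearly half” and “roughly one in five” come from.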

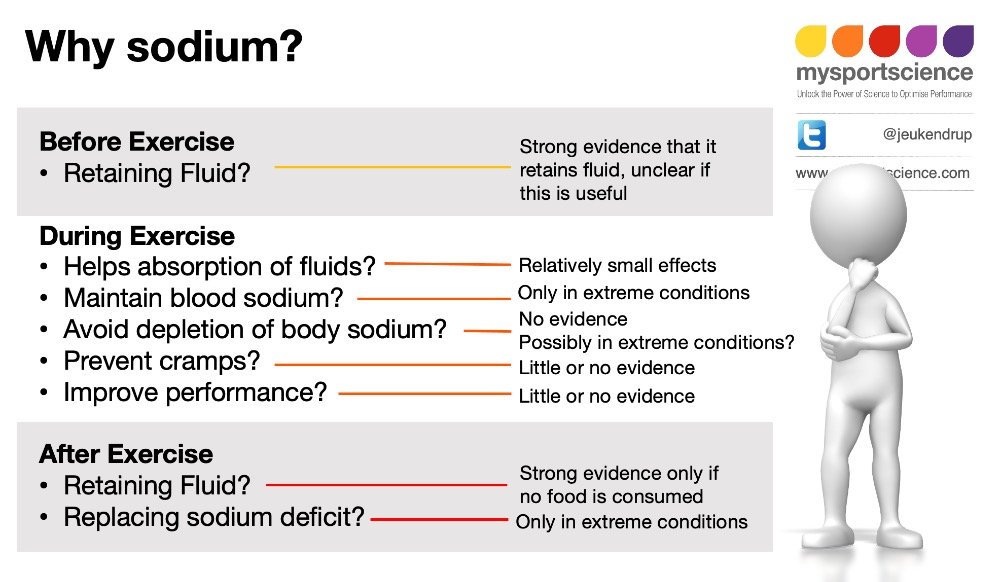

Performance also differed by category. The chatbots did relatively better in vaccines and cancer. They did worse in stem cells, athletic performance and nutrition. For readers of MySportScience, that point is especially relevant. Two of the weakest domains were directly related to performance and nutrition, exactly the kind of fields where practitioners and athletes may increasingly turn to AI for support.

There was another important pattern. Closed-ended questions produced fewer highly problematic responses. Open-ended questions produced many more. That makes sense. The more freedom a model has to generate, the more room there is for speculation, hedging, false balance and unsupported advice.

The study also showed how rarely chatbots chose restraint. Across 250 total questions, there were only two refusals to answer, both from Meta AI. In other words, these systems usually gave an answer even when caution, deferral or refusal might have been the safer response.

Why the referencing data are so important

One of the most revealing parts of the paper was the citation audit. When asked to provide scientific references for their answers, the chatbots often returned incomplete, inaccurate or fabricated citations. Across the study, no chatbot produced a fully complete and accurate reference list for any prompt. The median completeness score was just 40%. Even the better-performing models were far from reliable.

This matters because references create trust. A reader sees author names, journal titles and article titles and assumes the answer is evidence-based. But if those references are wrong, incomplete or partly invented, the answer only looks scientific. It is credibility without verification. That is a key practical lesson. A chatbot does not become trustworthy simply because it provides references. In some cases, the references themselves need as much scrutiny as the main answer.

Readability was also a problem

The paper did not only look at accuracy. It also looked at readability. On average, all models produced responses rated as “Difficult,” roughly equivalent to college-level reading. That is far from ideal for public-facing health information.
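For readers curious how a readability rating like this can be computed, here is a minimal Python sketch. It assumes the metric is the Flesch Reading Ease score (this post only says standard readability scoring was used), where scores of roughly 30 to 50 are conventionally labelled “Difficult” and associated with college-level reading. The syllable counter is a crude vowel-group heuristic, and the function names are illustrative, not taken from the paper.

```python
import re


def count_syllables(word: str) -> int:
    """Crude heuristic (assumption): one syllable per run of consecutive vowels."""
    groups = re.findall(r"[aeiouy]+", word.lower())
    return max(1, len(groups))


def flesch_reading_ease(text: str) -> float:
    """Flesch Reading Ease: higher scores mean easier text."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z]+(?:'[A-Za-z]+)?", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    words_per_sentence = len(words) / len(sentences)
    syllables_per_word = syllables / len(words)
    return 206.835 - 1.015 * words_per_sentence - 84.6 * syllables_per_word


def band(score: float) -> str:
    """Map a score to the conventional Flesch difficulty labels."""
    if score >= 90:
        return "Very easy"
    if score >= 80:
        return "Easy"
    if score >= 70:
        return "Fairly easy"
    if score >= 60:
        return "Standard"
    if score >= 50:
        return "Fairly difficult"
    if score >= 30:
        return "Difficult"
    return "Very difficult"


sample = "Chatbots often answer with confidence. Their references may be unreliable."
score = flesch_reading_ease(sample)
print(f"{score:.1f} -> {band(score)}")
```

Long sentences and long words both pull the score down, which is why polished, technical-sounding answers tend to land in the harder bands. A dedicated readability library with a proper syllable dictionary would give more dependable scores than this heuristic.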

This creates an awkward and potentially harmful combination. The answers sound polished. They sound confident. They may even look scientific. But they are often too complex for the general public, and in many cases not reliable enough to justify the confidence with which they are presented.

What this means for sport and sports nutrition

This is where the paper connects directly to the broader AI discussion we have already been having on MySportScience.

In Artificial intelligence (AI) in sport, the focus was on what AI is, how it works, and where it fits into high-performance environments. That article made an important point: AI is already influencing recruitment, training planning, tactical decision-making and support systems in elite sport. It is not a future concept. It is already here.

In Artificial intelligence (AI) in sports nutrition, the emphasis was even closer to daily practice. Sports nutritionists, dietitians and athletes now interact with AI in many forms, often without even thinking about it. Readiness scores, automated suggestions, recovery summaries and data interpretation are already part of routine workflows. But that article also stressed something essential: the professionals who will benefit most are not those who reject AI, and not those who trust it blindly, but those who understand where it is reliable and where it is not.

This new BMJ Open paper supports exactly that message. AI can be helpful, but only when its limitations are understood. In fields such as nutrition and athletic performance, the risks are not theoretical. These are areas already saturated with commercial claims, oversimplified messaging and pseudoscience. If a chatbot is trained on mixed-quality information and then presents it with authority, the result can look much stronger than it really is.

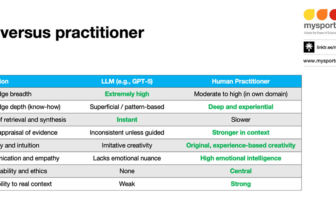

That is also why the MySportScience blog Will artificial intelligence (AI) replace sports practitioners? is so relevant here. The real issue is not replacement. The real issue is judgement. AI may help organise, summarise and accelerate, but evidence appraisal, context, ethics and decision-making still rely heavily on trained practitioners. This paper is a good reminder that human expertise is not a luxury added at the end. It is central to safe and effective use.

The bigger implication

The most important conclusion of the paper is also the simplest: without public education and oversight, there is a risk that AI amplifies misinformation rather than reducing it.

Tools matter. But so do the data they are trained on, the way they are deployed, the safeguards they include, and the knowledge of the person using them. In health, medicine, sport and sports nutrition, fluent language should never be confused with understanding. A polished answer can still be wrong. A confident answer can still be misleading. And a citation list can still be fabricated.

Practical take-home message

So where does this leave us? AI can be useful for support tasks. It can help structure information, speed up routine work and generate first drafts. But when the task is evidence interpretation, health guidance or decision-making in complex domains, caution is essential.

- For practitioners, the lesson is simple: verify claims, check references, challenge confident answers, and do not confuse readability or fluency with quality.

- For the public, the lesson is equally important: chatbots may be convenient, but convenience is not the same as reliability and does not mean you can always trust them.

- And for all of us working in evidence-based sport and sports nutrition, this paper is a timely reminder that good practice still depends on critical thinking, deeper knowledge and professional judgement.

Reference

- Nicholas B Tiller, Alessandro R Marcon, Marco Zenone, Kristin E Kidd, Asker E Jeukendrup, Zubin Master, Timothy Caulfield. Generative artificial intelligence-driven chatbots and medical misinformation: an accuracy, referencing and readability audit. BMJ Open 2026;16:e112695. doi: 10.1136/bmjopen-2025-112695
